NVIDIA Quantum-2 QM9700-NS2F Managed InfiniBand Switch, 64 Ports, 400G NDR, 51.2 Tb/s Throughput, P2C Airflow

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MQM9700-NS2F (920-9B210-00FN-0M0) |
| Document: | MQM9700 series.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer box |
| Delivery time: | Subject to stock availability |
| Payment terms: | T/T |
| Supply ability: | Supplied per project or batch |

Detailed information

| Model number: | MQM9700-NS2F (920-9B210-00FN-0M0) | Brand: | Mellanox |
|---|---|---|---|
| Uplink connectivity: | 400 Gb/s | Name: | MQM9700-NS2F Mellanox 1U InfiniBand network switch, NDR 400 Gb/s, for servers |
| Keyword: | Mellanox network | Port configuration: | 64x 400G, 32x OSFP |
| Highlights: | NVIDIA Quantum-2 InfiniBand switch, 64 ports, Mellanox 400G NDR network switch | | |

Product description

Industry-standard NDR 400 Gb/s per port | 51.2 Tb/s aggregate throughput | SHARPv3 in-network computing | P2C forward airflow for an optimized thermal design | Ultra-low latency for AI and HPC networks

The NVIDIA Quantum-2 QM9700-NS2F is a fully managed 1U InfiniBand switch with power-to-connector (P2C) forward airflow, providing 64 non-blocking 400 Gb/s (NDR) ports. Designed for large-scale AI, scientific research, and high-performance computing (HPC) clusters, it scales through 51.2 Tb/s of aggregate bidirectional throughput and more than 66.5 billion packets per second. Using SHARPv3, adaptive routing, and RDMA, the QM9700-NS2F accelerates data movement and in-network computing for the most demanding workloads.

- Switch radix: 64 non-blocking 400G ports (32 OSFP connectors)

- Throughput: 51.2 Tb/s aggregate bidirectional

- Packet rate: more than 66.5 billion packets per second (BPPS)

- SHARPv3: 32x AI acceleration compared with the previous generation

- Airflow: power-to-connector (P2C) forward airflow, suited to drawing cold air from the cold aisle and exhausting hot air into the hot aisle

- Management: on-board subnet manager for up to 2,000 nodes, MLNX-OS, CLI, WebUI, SNMP, JSON API

- Power and cooling: 1+1 redundant hot-swappable power supplies, hot-swappable fan units, front-to-back (P2C) cooling
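
The headline throughput figure follows directly from the port count and per-port speed. The short sketch below reproduces that arithmetic using only the numbers quoted in this listing; the function and constant names are illustrative and not part of any NVIDIA tool.

```python
# Figures quoted in the listing; the helper names are illustrative only.
PORTS_NDR = 64          # 400 Gb/s NDR ports
PORT_SPEED_GBPS = 400   # per-port speed in one direction
OSFP_CONNECTORS = 32    # physical OSFP connectors on the front panel


def aggregate_bidirectional_tbps(ports: int, speed_gbps: int) -> float:
    """Sum of per-port bandwidth counted in both directions, in Tb/s."""
    return ports * speed_gbps * 2 / 1000


def ports_per_connector(ports: int, connectors: int) -> int:
    """Number of logical ports carried by each physical connector."""
    return ports // connectors


print(aggregate_bidirectional_tbps(PORTS_NDR, PORT_SPEED_GBPS))  # 51.2
print(ports_per_connector(PORTS_NDR, OSFP_CONNECTORS))           # 2
# Splitting into 128 ports at 200 Gb/s keeps the same aggregate figure.
print(aggregate_bidirectional_tbps(128, 200))                    # 51.2
```

Note that 51.2 Tb/s is a bidirectional total; in a single direction the switch carries 25.6 Tb/s.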

Built on the NVIDIA Quantum-2 platform, the QM9700 series raises switching density and efficiency in the data center. The QM9700-NS2F (managed, P2C forward airflow) packs 64 InfiniBand ports at 400 Gb/s into a compact 1U chassis. Port-split technology provides up to 128 ports at 200 Gb/s and supports flexible topologies such as Fat Tree, DragonFly+, SlimFly, and multi-dimensional Torus. Backward compatibility with previous InfiniBand generations allows smooth integration into existing infrastructure. With advanced telemetry, congestion control, and self-healing network capabilities, the switch maximizes application throughput while simplifying operations.

32 OSFP connectors carry 64 ports of 400G NDR, or 128 ports of 200G via breakout cables, for the densest top-of-rack InfiniBand switching in a 1U form factor.

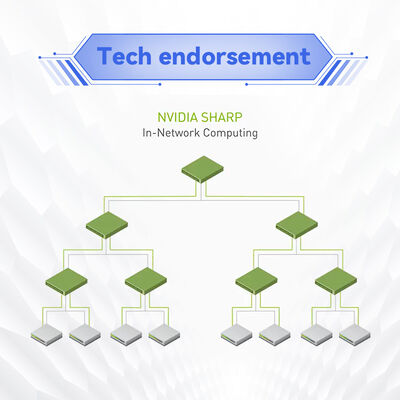

The third generation of the NVIDIA Scalable Hierarchical Aggregation and Reduction Protocol (SHARPv3) accelerates AI collective operations by up to 32x, reducing data movement and latency.

Remote direct memory access (RDMA) and adaptive routing remove bottlenecks, while enhanced virtual lane mapping and congestion control keep performance consistent.

Automatic failover, link-level retransmission, and advanced monitoring keep critical workloads running without interruption.

The on-board subnet manager supports up to 2,000 nodes out of the box; full chassis management is available through the CLI, WebUI, SNMP, and JSON/REST APIs.

Optimized for scalable, cost-effective cluster designs: the doubled radix reduces the number of network tiers and lowers total cost of ownership.
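
To see why a higher radix can remove a network tier, the sketch below uses the textbook capacity of a non-blocking fat tree, in which every switch below the top tier splits its ports evenly between downlinks and uplinks. This is a simplified model rather than a sizing tool: the 32-port comparison switch is a hypothetical stand-in for "half the radix", and real designs may be oversubscribed or reserve ports for storage and management.

```python
def max_endpoints(radix: int, tiers: int) -> int:
    """Endpoints supported by a non-blocking fat tree of identical switches.

    One tier is a single switch (radix endpoints); each extra tier
    multiplies capacity by radix / 2.
    """
    return 2 * (radix // 2) ** tiers


def tiers_needed(endpoints: int, radix: int) -> int:
    """Smallest number of tiers that can attach the requested endpoints."""
    if radix < 4:
        raise ValueError("radix must be at least 4 for the tree to grow")
    tiers = 1
    while max_endpoints(radix, tiers) < endpoints:
        tiers += 1
    return tiers


for radix in (32, 64):  # 32 is a hypothetical half-radix switch
    print(radix, max_endpoints(radix, 2), tiers_needed(2000, radix))
```

Under these assumptions a 2,000-endpoint fabric fits in two tiers of 64-port switches (2,048 endpoints) but needs three tiers of 32-port switches.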

The Quantum-2 platform integrates NVIDIA's latest SerDes technology for 400 Gb/s NDR ports, delivering 51.2 Tb/s of switching capacity. Key innovations include SHARPv3 in-network computing, which offloads collective operations from the compute nodes and significantly improves AI training efficiency. The fabric supports remote direct memory access (RDMA), which bypasses kernel overhead on the hosts and brings latency into the microsecond range. Adaptive routing dynamically spreads traffic across multiple paths to avoid congestion, while enhanced quality of service (QoS) and virtual lane (VL) mapping guarantee bandwidth for critical applications. The QM9700-NS2F also includes advanced telemetry for real-time network monitoring and self-healing capabilities that automatically reroute traffic around link failures.

- AI and machine learning clusters: high-radix NDR switches connect large GPU supercomputing clusters, with SHARPv3 accelerating NCCL collective operations.

- HPC research centers: SlimFly or Fat Tree topologies connect thousands of nodes with ultra-low latency for weather modeling, genomics, and physics.

- Enterprise data centers: consolidate east-west traffic on a 400G spine-leaf architecture, reducing the number of tiers and operating costs.

- Cloud and hyperscale deployments: DragonFly+ and multi-dimensional torus for maximum scalability with high bandwidth density per rack unit.

- Storage and I/O expansion: connect high-performance storage systems using NVMe over Fabrics (NVMe-oF) over InfiniBand.

The QM9700-NS2F is fully compatible with NVIDIA InfiniBand adapters (ConnectX-6, ConnectX-7, ConnectX-8), cables (active and passive copper, active fiber, optical modules), and the previous FDR/EDR/HDR generations. It runs MLNX-OS, which offers extensive API support for automation frameworks, and works with NVIDIA Unified Fabric Manager (UFM) for advanced monitoring, predictive analytics, and telemetry. The switch integrates with the major HPC schedulers and open network automation tools.

| Component | Supported models/standards |
|---|---|
| Host adapters | NVIDIA ConnectX-6 / ConnectX-7 / ConnectX-8 InfiniBand, NDR 400G adapters |
| Cables and transceivers | Passive copper OSFP (up to 2.5 m), active copper, active fiber (up to 500 m), optical modules (QSFP-DD to OSFP adapters for 200G split) |
| Previous InfiniBand speeds | HDR (200 Gb/s), EDR (100 Gb/s), FDR (56 Gb/s), backward compatible |
| Management and automation | MLNX-OS, UFM, Prometheus/Grafana via SNMP, JSON-RPC, Ansible modules |
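
The reach limits in the compatibility table can be turned into a first-pass media suggestion per link. The sketch below is only a rough filter built on the two reach figures the table states (2.5 m for passive copper, 500 m for active fiber); active copper is left out because the listing gives no reach for it, and the function name is hypothetical. Validated part numbers should still come from the compatibility matrix.

```python
PASSIVE_COPPER_MAX_M = 2.5   # reach quoted in the table above
ACTIVE_FIBER_MAX_M = 500.0   # reach quoted in the table above


def suggest_media(distance_m: float) -> str:
    """Suggest a cable type for a link of the given length in meters."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if distance_m <= PASSIVE_COPPER_MAX_M:
        return "passive copper OSFP"
    if distance_m <= ACTIVE_FIBER_MAX_M:
        return "active fiber"
    return "beyond the reach listed here; consult the vendor"


for length in (1.5, 30, 800):
    print(length, "->", suggest_media(length))
```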

| Parameter | Specification |
|---|---|
| Ports and speed | 64 non-blocking InfiniBand ports at 400 Gb/s (NDR); 32 OSFP connectors; supports 128 ports at 200 Gb/s via breakout cables |
| Switching capacity | 51.2 Tb/s aggregate bidirectional throughput; more than 66.5 billion packets per second (BPPS) |
| Latency | Less than 130 ns port to port with dynamic routing (typical) |
| Processor and memory | x86 Coffee Lake i3, 8 GB DDR4 SO-DIMM (2666 MT/s), 16 GB M.2 SSD |
| Management interfaces | 1x USB 3.0, 1x USB (I2C), 1x RJ45 (Ethernet), 1x RJ45 (UART) |
| Power supply | 1+1 redundant, hot-swappable, 200–240 V AC, 80 PLUS Gold+ certified |
| Cooling and airflow | Power-to-connector (P2C) forward airflow (NS2F model); hot-swappable fan units, front-to-back cooling |
| Dimensions (H x W x D) | 43.6 mm (1.7 in) x 438 mm (17.2 in) x 660.4 mm (26.0 in) |
| Weight | 14.5 kg (31.97 lb) |
| Operating conditions | Temperature: 0°C to 40°C; humidity: 10% to 85%, non-condensing; altitude up to 3,050 m |
| Regulatory and safety | RoHS, CE, FCC, VCCI, cTUVus, CB, RCM, ENERGY STAR |
| Warranty | 1-year manufacturer warranty (extendable) |

| Orderable part number | Description | Management | Airflow direction |
|---|---|---|---|
| MQM9700-NS2F | 64 ports of 400 Gb/s InfiniBand, managed switch, 32 OSFP connectors | Full on-board subnet manager, MLNX-OS | Power-to-connector (P2C), forward airflow |
| MQM9700-NS2R | 64 ports of 400 Gb/s InfiniBand, managed switch | Managed (same features) | Connector-to-power (C2P), reverse airflow |
| MQM9790-NS2F | 64 ports of 400 Gb/s, unmanaged switch | Unmanaged, external management via UFM | P2C forward airflow |
| MQM9790-NS2R | 64 ports of 400 Gb/s, unmanaged switch | Unmanaged | C2P reverse airflow |
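
The four variants differ only in management and airflow direction, so choosing a part number is a two-key lookup. The sketch below encodes the ordering table as data; the `Variant` type and `pick_variant` function are illustrative names, not part of any ordering system.

```python
from typing import NamedTuple


class Variant(NamedTuple):
    part_number: str
    managed: bool   # True when the switch has the on-board subnet manager
    airflow: str    # "P2C" (forward) or "C2P" (reverse)


# Rows copied from the ordering table above.
VARIANTS = [
    Variant("MQM9700-NS2F", True, "P2C"),
    Variant("MQM9700-NS2R", True, "C2P"),
    Variant("MQM9790-NS2F", False, "P2C"),
    Variant("MQM9790-NS2R", False, "C2P"),
]


def pick_variant(managed: bool, airflow: str) -> str:
    """Return the part number matching the management and airflow needs."""
    wanted = airflow.upper()
    for variant in VARIANTS:
        if variant.managed == managed and variant.airflow == wanted:
            return variant.part_number
    raise ValueError(f"no variant with managed={managed}, airflow={airflow!r}")


print(pick_variant(managed=True, airflow="P2C"))   # MQM9700-NS2F
print(pick_variant(managed=False, airflow="c2p"))  # MQM9790-NS2R
```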

The QM9700-NS2F suits customers who need advanced on-board management (subnet manager) and power-to-connector (P2C) forward airflow. This airflow draws cold air from the cold aisle through the power side and exhausts the heated air through the connector side, which matches standard front-to-back cooling designs. Check the airflow strategy of your rack before ordering.

Warehouses and fulfillment partners provide fast worldwide delivery with secure packaging.

Our in-house engineers help validate network topologies, cabling plans, and firmware requirements.

Authorized-partner pricing with extended warranty options and expedited replacement programs.

Dedicated support in English, Chinese (Mandarin, Cantonese), and regional languages for smooth procurement.

Starsurge provides end-to-end support across the full lifecycle, from design consultation through deployment to after-sales service. Our services include on-site installation guidance, RMA handling, firmware upgrade assistance, and custom cabling solutions. For large-scale projects we offer dedicated account management and 24/7 technical escalation. The QM9700-NS2F ships with a 1-year hardware warranty; extended support packages are available on request.

The main difference is airflow direction: the NS2F uses power-to-connector (P2C) forward airflow (cold air intake on the power side, exhaust through the connector side), while the NS2R uses connector-to-power (C2P) reverse airflow. Choose according to the hot/cold aisle layout of your data center.

The QM9700 is backward compatible with HDR (200G), EDR (100G), and FDR (56G) speeds when used with the appropriate adapter cables or breakout options. Consult the compatibility matrix or contact Starsurge for validated cable part numbers.

An external subnet manager is not required: the QM9700-NS2F has an integrated on-board subnet manager that can manage up to 2,000 nodes, which simplifies small and mid-scale deployments. Larger fabrics can use an external SM or UFM.

InfiniBand fabrics are independent of the server vendor. Any server with a supported InfiniBand HCA (for example, the ConnectX series) can be connected.

Typical power consumption depends on the port and cable configuration. The power supplies are 1+1 redundant, rated for 200–240 V AC, and Gold+ certified. Contact us for a detailed power plan based on your deployment.

- Make sure the airflow direction (P2C forward for the NS2F) matches the ventilation strategy of your rack: this model draws air in on the power side and exhausts it through the OSFP connector side.

- Use only approved NVIDIA OSFP modules or qualified passive/active copper or fiber cables for 400G performance.

- Installation must be carried out by qualified network personnel observing electrostatic discharge (ESD) precautions.

- Perform firmware upgrades according to the MLNX-OS guidelines to avoid interruptions, and schedule maintenance windows accordingly.

- Make sure the input voltage is within 200–240 V AC, with redundant feeds to maintain high availability.

Founded in 2008, Starsurge is a technology-focused supplier of network equipment, IT services, and system integration solutions. We serve government, healthcare, manufacturing, education, finance, and enterprise customers worldwide. With an experienced sales and engineering team, we supply reliable network equipment including switches, network adapters, wireless controllers, cables, and IoT solutions. Our customer-focused approach delivers scalable, efficient, and future-ready infrastructure. Multilingual support and global shipping make Starsurge a dependable partner for NVIDIA and data center solutions.

- ☑ Confirm that the airflow direction (P2C forward) matches the cold-air intake design of your data center

- ☑ Check the input voltage: 200–240 V AC with redundant feeds and sufficient circuit capacity

- ☑ Select suitable OSFP cables/transceivers: active/passive copper or fiber, depending on distance requirements

- ☑ Plan the network topology (Fat Tree, DragonFly+, etc.) and the number of nodes to scale to

- ☑ Make sure the host adapters support NDR (ConnectX-7 or newer for 400G) or are compatible at lower speeds

- ☑ Allocate a management IP address and review the MLNX-OS configuration (no additional licensing is required for core switching functionality)

- ☑ Prepare rack space: 1U height and up to 660 mm depth, allowing for cable management and power cables
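
The physical and electrical items in this checklist can be expressed as a small pre-installation check. The sketch below is an illustration only: the `Site` record and its field names are hypothetical, the thresholds are the figures quoted in this listing, and extra depth for cabling is not modeled because the listing gives no value for it.

```python
from dataclasses import dataclass

# Values quoted in the listing; everything else is illustrative.
CHASSIS_DEPTH_MM = 660.4
VOLTAGE_RANGE_VAC = (200, 240)


@dataclass
class Site:
    """Hypothetical description of the rack position being prepared."""
    supply_voltage_vac: float
    redundant_feeds: bool
    usable_depth_mm: float
    free_rack_units: int
    cold_aisle_faces: str  # "power" or "connector" side of the switch


def readiness_issues(site: Site) -> list[str]:
    """Return the checklist items this site fails for an MQM9700-NS2F."""
    issues = []
    low, high = VOLTAGE_RANGE_VAC
    if not low <= site.supply_voltage_vac <= high:
        issues.append("input voltage outside 200-240 V AC")
    if not site.redundant_feeds:
        issues.append("no redundant power feeds for the 1+1 supplies")
    if site.usable_depth_mm < CHASSIS_DEPTH_MM:
        issues.append("rack depth below the 660.4 mm chassis depth")
    if site.free_rack_units < 1:
        issues.append("no free 1U slot")
    if site.cold_aisle_faces != "power":
        issues.append("P2C intake is on the power side; consider the C2P NS2R model")
    return issues


site = Site(supply_voltage_vac=230, redundant_feeds=True,
            usable_depth_mm=800, free_rack_units=2,
            cold_aisle_faces="connector")
for issue in readiness_issues(site):
    print("-", issue)
```

An empty result means only that these five items pass; topology, adapter, and management planning from the checklist still need a manual review.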