NVIDIA Quantum-2 MQM9700-NS2R 64-Port 400Gb/s Managed InfiniBand Switch with Reverse Airflow (C2P)

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MQM9700-NS2F (920-9B210-00FN-0M0) |
| Document: | MQM9700 series.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Depends on stock availability |
| Payment terms: | T/T |
| Supply ability: | Supplied per project or batch |

Detailed information

| Model number: | MQM9700-NS2F (920-9B210-00FN-0M0) | Brand: | Mellanox |
|---|---|---|---|
| Uplink connectivity: | 400 Gb/s | Name: | Mellanox MQM9700-NS2F 1U NDR 400Gb/s InfiniBand network switch for servers |
| Keyword: | Mellanox network | Port configuration: | 64x 400G, 32x OSFP |
| Highlight: | NVIDIA Quantum-2 InfiniBand switch, 400 Gb/s managed network switch, 64-port InfiniBand switch with reverse airflow | | |

Product description

NVIDIA Quantum-2 MQM9700-NS2R 64-Port 400 Gb/s Managed InfiniBand Switch

A fully managed NDR switch with reverse airflow (connector-to-power, C2P) and an integrated subnet manager, providing 64 non-blocking 400 Gb/s InfiniBand ports in a compact 1U chassis.

Key features

- 64 NDR InfiniBand ports at 400 Gb/s in a non-blocking 1U architecture

- Double-density 200 Gb/s mode supporting up to 128 ports through port splitting

- Integrated subnet manager for up to 2,000 nodes

- SHARPv3 in-network computing with 32x higher AI acceleration

- Reverse airflow (C2P) for data centers that require back-to-front cooling

- 1+1 redundant, hot-swappable power supplies and fans

- Backward compatible with the HDR, EDR, and FDR InfiniBand generations

- 64: 400 Gb/s ports

- 51.2 Tb/s: aggregate bidirectional throughput

- 1U: rack height

- SHARPv3: in-network acceleration

- C2P: reverse airflow

- 2,000: managed nodes

Technical specifications

| Specification | Value |
|---|---|
| Ports | 32 OSFP connectors supporting 64 InfiniBand ports at 400 Gb/s (NDR) or 128 ports at 200 Gb/s |
| Aggregate throughput | 51.2 Tb/s bidirectional, non-blocking |
| Packet forwarding capacity | More than 66.5 billion packets per second (BPPS) |
| Management | Fully managed, with an on-board subnet manager (supports up to 2,000 nodes) |
| Power supply | 1+1 redundant, hot-swappable, 200-240 V AC, 80 PLUS Gold |
| Cooling / airflow | Reverse airflow: connector-to-power (C2P), hot-swappable fan units |
| Dimensions (H x W x D) | 1.7 in (43.6 mm) x 17.0 in (438 mm) x 26.0 in (660.4 mm) |
| Weight | Approx. 14.5 kg |
| Operating temperature | 0°C to 40°C |
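The port and throughput figures in the specifications follow from simple arithmetic, which the minimal Python sketch below reproduces. The constant and function names are illustrative only and are not part of any NVIDIA tool. The sketch assumes two 400 Gb/s ports per OSFP connector, and that split mode doubles the port count while halving the per-port speed.

```python
# Illustrative arithmetic only; names are hypothetical, values come from the listing.
OSFP_CONNECTORS = 32        # physical OSFP connectors on the switch
PORTS_PER_CONNECTOR = 2     # assumption: two NDR ports per OSFP connector
NDR_GBPS = 400              # speed of one NDR port in Gb/s


def port_summary(split: bool = False) -> dict:
    """Return port count, per-port speed, and aggregate throughput."""
    ports = OSFP_CONNECTORS * PORTS_PER_CONNECTOR
    speed = NDR_GBPS
    if split:
        # Split mode: each 400 Gb/s port becomes two 200 Gb/s ports.
        ports *= 2
        speed //= 2
    one_way_tbps = ports * speed / 1000
    return {
        "ports": ports,
        "gbps_per_port": speed,
        "one_way_tbps": one_way_tbps,
        "bidirectional_tbps": 2 * one_way_tbps,
    }


if __name__ == "__main__":
    print(port_summary())            # 64 ports at 400 Gb/s
    print(port_summary(split=True))  # 128 ports at 200 Gb/s
```

Both modes give the same aggregate figure: splitting ports changes how the bandwidth is divided, not how much of it the switch provides.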

Typical applications

- AI and machine learning clusters for training and inference of large language models

- High-performance computing (HPC) research simulation and modeling

- Hyperscale data centers with spine-leaf architectures

- Enterprise cloud and finance, including low-latency trading systems

- Government and academic supercomputing centers

Compatibility

Fully compatible with the NVIDIA InfiniBand ecosystem, including ConnectX-6/7 adapters, LinkX cables, and Quantum-2 series switches. Supports major Linux distributions and Windows Server.

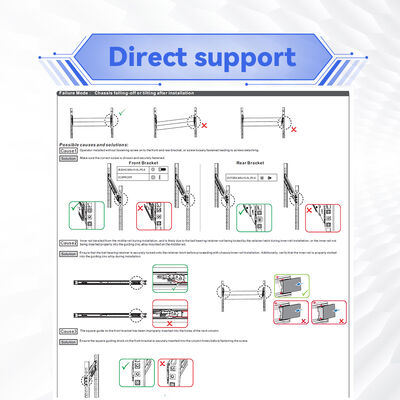

Buyer's checklist

- Verify the required airflow direction: confirm that C2P matches the cold-aisle layout of your rack

- Confirm that the fabric fits within the on-board subnet manager's limit of 2,000 nodes

- Plan cabling: 400G OSFP-to-OSFP, or split to 2x200G

- Confirm input power: 200-240 V AC with redundant supplies

- For large deployments, request a topology design review
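Before requesting a topology review, a rough switch count can be estimated. The Python sketch below assumes a non-blocking two-tier (leaf-spine) fat tree built entirely from 64-port switches, with half of each leaf's ports facing hosts and half facing spines. The function name and this layout are assumptions made for illustration, not a design procedure from the vendor, and a real design should still be reviewed.

```python
import math


def two_tier_fat_tree(hosts: int, radix: int = 64) -> dict:
    """Estimate leaf and spine counts for a non-blocking two-tier fabric.

    Hypothetical helper: assumes every switch has `radix` ports and each
    leaf splits its ports evenly between hosts and spine uplinks.
    """
    down = radix // 2           # leaf ports facing hosts
    up = radix - down           # leaf ports facing spines (1:1, non-blocking)
    max_hosts = radix * down    # a spine can reach at most `radix` leaves
    if hosts < 1 or hosts > max_hosts:
        raise ValueError(f"hosts must be between 1 and {max_hosts}")
    leaves = math.ceil(hosts / down)
    # Every leaf uplink must terminate on a spine port.
    spines = math.ceil(leaves * up / radix)
    return {"leaves": leaves, "spines": spines, "max_hosts": max_hosts}


if __name__ == "__main__":
    print(two_tier_fat_tree(512))
    print(two_tier_fat_tree(2000))
```

Under these assumptions a two-tier fabric of 64-port switches tops out at 2,048 hosts, close to the 2,000-node limit quoted for the on-board subnet manager. Larger fabrics would need a third tier and, presumably, an external subnet manager.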

Important notices: Make sure the C2P airflow orientation matches your rack configuration. Use only NVIDIA-certified optical modules and cables. Operating altitude is up to 3,050 m; the temperature must not exceed 40°C.
