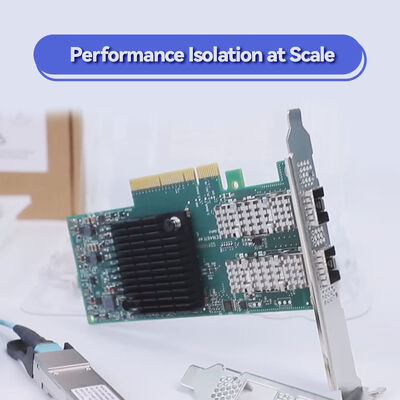

NVIDIA Mellanox ConnectX-6 MCX653106A-ECAT 100Gb/s Dual-Port InfiniBand/Ethernet Adapter

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MCX653106A-ECAT |
| Document: | connectx-6-infiniband.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer box |
| Delivery time: | Based on stock availability |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed information

| Product status: | In stock | Application: | Server |
|---|---|---|---|
| Interface type: | InfiniBand | Ports: | Dual |
| Maximum speed: | 100GbE | Type: | Wired |
| Condition: | New and original | Warranty period: | 1 year |
| Model: | MCX653106A-ECAT | Name: | Mellanox ConnectX-6 VPI HDR100/EDR IB 100Gb dual-port NIC MCX653106A-ECAT |
| Keyword: | Mellanox network card | | |
| Highlights: | Mellanox ConnectX-6 network adapter, 100 Gb/s InfiniBand Ethernet card, dual-port Mellanox network card | | |

Product description

A versatile dual-port 100Gb/s InfiniBand and Ethernet adapter card with a PCIe 3.0/4.0 x16 interface, providing RDMA, NVMe-oF, block-level encryption, and in-network computing for cost-optimized HPC, enterprise, and cloud deployments.

- Dual-port connectivity: 100Gb/s InfiniBand (EDR/HDR100) and 100/50/40/25/10GbE

- PCIe Gen 3.0/4.0 x16 (backward compatible)

- Hardware offloads: NVMe-oF target/initiator, XTS-AES 256/512-bit encryption, MPI tag matching

- Support for NVIDIA In-Network Computing and GPUDirect RDMA

- Low-profile PCIe form factor, RoHS compliant

- 100 Gb/s throughput: dual ports running at up to 100 Gb/s InfiniBand (EDR/HDR100) or Ethernet with full bidirectional bandwidth.

- In-network computing: offloads collective operations (MPI, NCCL, SHMEM) using NVIDIA SHARP technology.

- Block-level encryption: hardware AES-XTS for 256/512-bit encryption/decryption with no CPU overhead; FIPS compatible.

- NVMe-oF offloads: target and initiator offloads for NVMe over Fabrics, reducing CPU utilization.

- Advanced virtualization: SR-IOV with up to 1K VFs, ASAP2 acceleration for OVS and virtual switching.

The MCX653106A-ECAT integrates NVIDIA In-Network Computing engines (SHARP), RDMA (IBTA 1.3), RoCE, and NVMe-oF. It supports PCIe Gen 4.0 (x16) and Gen 3.0, PAM4 and NRZ SerDes, and advanced features such as Dynamically Connected Transport (DCT), On-Demand Paging (ODP), and adaptive routing. Overlay-network offloads for VXLAN, NVGRE, and Geneve are hardware-accelerated. The card complies with IEEE 802.3bj, 802.3bm, and 802.3by and with InfiniBand Trade Association specifications.

ConnectX-6 offloads communication and storage tasks from the host CPU to the adapter hardware. For storage, NVMe-oF commands are processed directly on the adapter, freeing CPU cores. The result is lower latency, a higher message rate (215 Mpps), and improved application scalability, even at 100 Gb/s.

- Mid-range HPC clusters: MPI-based simulations that need a cost-effective 100 Gb/s interconnect.

- AI inference and training: GPU clusters using GPUDirect RDMA and NCCL collectives.

- NVMe-oF storage: target/initiator offloads for high-performance access to NVMe storage.

- Virtualized data centers: SR-IOV and ASAP2 for OVS offload in NFV and cloud.

- Enterprise cloud: 100 Gb Ethernet for virtualization and storage convergence.

| Model | Ports and speed | Host interface | Form factor | Encryption | Protocols | OPN |
|---|---|---|---|---|---|---|
| ConnectX-6 | 2x QSFP56 (100 Gb/s IB/Eth) | PCIe 3.0/4.0 x16 | PCIe stand-up (low profile) | AES-XTS 256/512-bit | InfiniBand, Ethernet, NVMe-oF | MCX653106A-ECAT |
| ConnectX-6 | 1x QSFP56 (100 Gb/s) | PCIe 4.0 x8 | PCIe stand-up | AES-XTS | IB/Eth | MCX651105A-EDAT |
| ConnectX-6 | 2x QSFP56 (200 Gb/s) | PCIe 4.0 x16 | PCIe stand-up | AES-XTS | IB/Eth | MCX653106A-HDAT |

Note: The MCX653106A-ECAT supports 100Gb/s InfiniBand (EDR/HDR100) and 100/50/25/10GbE. Dimensions: 167.65 mm x 68.90 mm (without bracket). Power consumption: < 15 W.

- vs. ConnectX-5: double the throughput (100 Gb/s vs. 50 Gb/s), integrated SHARP for in-network computing, and block-level encryption at no additional cost.

- vs. competitors: true hardware offload for NVMe-oF and MPI collectives, not just stateless offloads.

- 100G value: ideal for balancing performance and budget in mid-size clusters.

- FIPS compliance: hardware encryption meets government security standards.

We provide 24/7 technical consulting, RMA services, and integration support for ConnectX-6 adapters. Each card comes with a 1-year warranty. Our team validates drivers for major Linux distributions. Pre-sales configuration support for InfiniBand/Ethernet fabric design is available.

Q: Is the MCX653106A-ECAT compatible with 200 Gb/s Quantum switches?

A: Yes, it is compatible with NVIDIA Quantum QM8700/QM8790 switches when operating in HDR100 mode (100 Gb/s per port).

Q: Can this adapter be used for both Ethernet and InfiniBand?

A: Yes, it supports both the InfiniBand and Ethernet protocols.

Q: Does it support RoCE (RDMA over Converged Ethernet)?

A: Yes, ConnectX-6 fully supports RoCE, providing low-latency RDMA in Ethernet environments.

Q: What is the maximum message rate?

A: The adapter delivers up to 215 million messages per second, which suits HPC workloads dominated by small packets.
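For a sense of scale, the 215 million messages per second quoted above can be set beside the theoretical Ethernet frame rate of a 100 Gb/s link. The sketch below is only an illustration: the function name `eth_line_rate_mpps` is hypothetical, and it assumes the standard Ethernet on-wire overhead of 20 bytes per frame (preamble, start-of-frame delimiter, and inter-frame gap). RDMA message rate and Ethernet frame rate are different metrics, so the comparison is indicative at best.

```python
# Bytes on the wire per frame beyond the frame itself (assumed standard Ethernet):
# 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap.
ETH_OVERHEAD_BYTES = 20


def eth_line_rate_mpps(link_gbps: float, frame_bytes: int = 64) -> float:
    """Theoretical maximum frame rate of one Ethernet port, in millions of frames/s."""
    bits_per_frame = (frame_bytes + ETH_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_per_frame / 1e6


if __name__ == "__main__":
    # Print the per-port ceiling for a few common frame sizes at 100 Gb/s.
    for size in (64, 256, 1518):
        rate = eth_line_rate_mpps(100, size)
        print(f"{size:>5} B frames at 100 Gb/s: {rate:.2f} Mpps per port")
```

The smaller the frame, the higher the frame rate the adapter must sustain to fill the link, which is why message rate matters most for small-packet workloads.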

Q: Is the card compatible with PCIe Gen 3.0 slots?

A: Yes, it is fully compatible with PCIe Gen 3.0 x16 slots; aggregate performance will be limited to roughly 100 Gb/s, which matches the speed of a single port.
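The slot-bandwidth reasoning behind this answer can be checked with simple arithmetic. The sketch below assumes the published PCIe signaling rates (8 GT/s per lane for Gen 3.0, 16 GT/s for Gen 4.0) and 128b/130b line encoding. It ignores packet (TLP/DLLP) overhead, so real throughput is lower, which is consistent with the roughly 100 Gb/s figure quoted above. The names `pcie_gbps` and `slot_can_feed` are hypothetical helpers, not part of any NVIDIA tool.

```python
# Per-lane signaling rate in GT/s for each PCIe generation (assumed from the PCIe specs).
GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0}

# Gen 3.0 and Gen 4.0 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(gen: str, lanes: int) -> float:
    """Raw per-direction slot bandwidth in Gb/s, before TLP/DLLP protocol overhead."""
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def slot_can_feed(gen: str, lanes: int, active_ports: int, port_gbps: float = 100.0) -> bool:
    """True if the slot's raw bandwidth covers all active ports running at line rate."""
    return pcie_gbps(gen, lanes) >= active_ports * port_gbps


if __name__ == "__main__":
    for gen, lanes in (("3.0", 16), ("4.0", 8), ("4.0", 16)):
        bw = pcie_gbps(gen, lanes)
        one = slot_can_feed(gen, lanes, active_ports=1)
        two = slot_can_feed(gen, lanes, active_ports=2)
        print(f"Gen {gen} x{lanes}: {bw:.1f} Gb/s raw, one port: {one}, both ports: {two}")
```

Under these assumptions a Gen 3.0 x16 or Gen 4.0 x8 slot offers about 126 Gb/s per direction before protocol overhead, enough for one port at line rate but not both at once, while a Gen 4.0 x16 slot offers about 252 Gb/s.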

- PCIe slot requirement: for optimal performance, install the card in a PCIe Gen 3.0 x16 or Gen 4.0 x8/x16 slot.

- Cooling: ensure adequate airflow in the server chassis; passive cooling requires at least 200 LFM.

- Cabling: use QSFP56 passive/active copper cables or optical modules rated for 100 Gb/s (EDR/HDR100).

- Driver support: use the latest NVIDIA MLNX_OFED for Linux or WinOF-2 for Windows.

- Operating temperature: 0°C to 70°C; storage temperature: -40°C to 85°C.

With more than a decade of experience, we operate a large facility backed by a strong technical team. Our broad customer base and industry expertise let us offer competitive prices without compromising quality. As authorized distributors of Mellanox, Ruckus, Aruba, and Extreme, we stock original network switches, network card solutions, wireless access points, controllers, and cables. We maintain USD 10 million in inventory to ensure fast fulfillment across product lines. Every shipment is checked for accuracy, and we provide 24/7 consulting and technical support. Our professional sales and engineering teams have earned a strong reputation in global markets.